Because there was very little discussion about that in community forums like StackOverflow or their own forums.

First, we'll be discussing why and where we need Filebeat and Logstash.

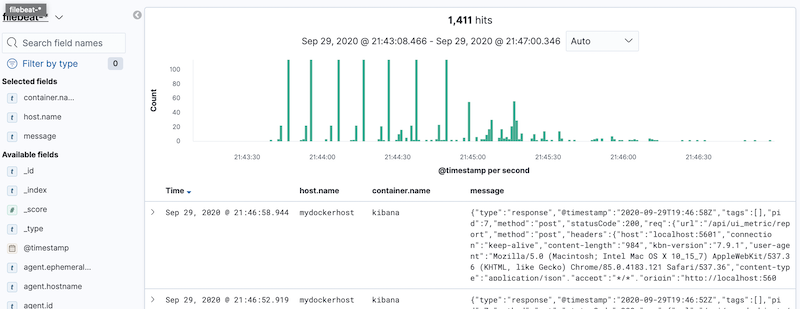

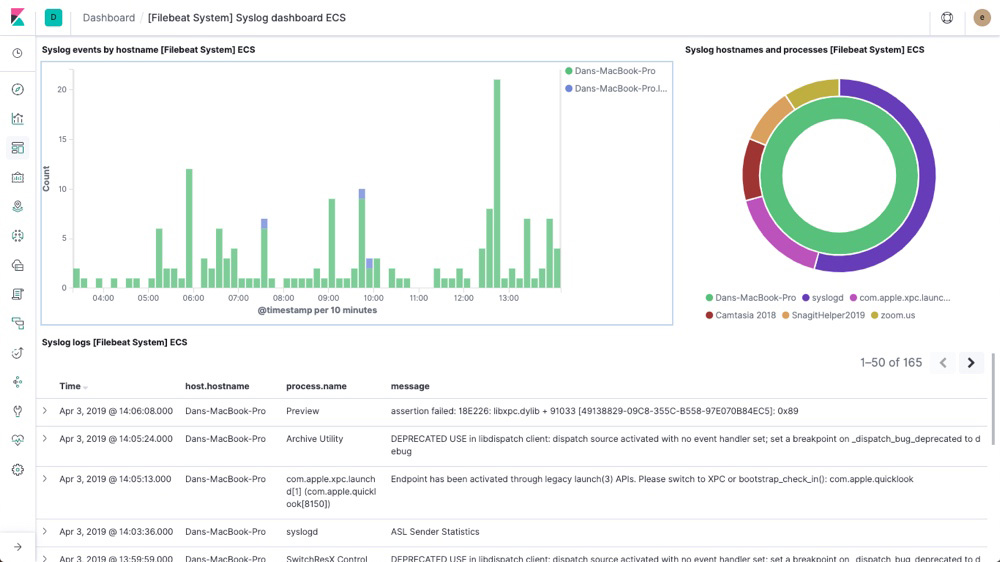

There are a lot of blogs and video tutorials on how to set up Filebeat and Logstash with self-hosted Elasticsearch. But when I tried to connect the same thing with Elastic Cloud, I faced too many problems.

I have also included the filebeat.yml file in the GitHub repo. I will explain the steps to launch the Filebeat container below, when launching the whole stack.

Now that we have an understanding of all the separate components as Docker services, we will launch the full stack with all three services (Elasticsearch, Logstash and Kibana). Once that is launched, I will explain how to launch and configure the Filebeat container to ship logs to the Logstash service.

Initialize the swarm

The first step is to initiate the Docker swarm. Run the following command on the instance which is supposed to be the manager node:

10.0.0.0 - test_log "GET /admin HTTP/1.1" 301 566 "-" "Mozilla/5.0 (Windows U Windows NT 5.1 en-US rv:1.9.2.3) Gecko/20100401 Firefox/3.6.3"

20.12.12.23 - "GET /favicon.ico HTTP/1.1" 200 1189 "-" "Mozilla/4.0 (compatible MSIE 8.0 Windows NT 5.1 Trident/4.0.

Once the log file is saved, we will test whether the log data is visible in the Kibana app. Follow the steps below to test the logs in Kibana:

1. Once logged in, navigate to the Discover tab.
2. Create a new Index and provide the index pattern as seen on the Kibana page.
3. Save the Index and navigate back to the Discover tab.
4. You should be able to see the log data which was entered in the sample log file above.

This confirms that Filebeat is able to send the log data to Logstash, which then sends it to Elasticsearch, from where it is displayed in Kibana.

If any of the components doesn't seem to work properly, or the log data is not visible as expected in Kibana, errors or issues can be found by checking the logs of each launched Docker container or service.
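To make the full-stack launch described above more concrete, here is a rough sketch of what a compose file for the three services might look like. It is not the file from the repo: the image names and tags, the `elk` network name, the published ports and the single-replica setting are all assumptions chosen for illustration.

```yaml
# Hypothetical compose file for the three services; not the author's actual file.
version: "3.7"

services:
  elasticsearch:
    # Assumed official image and tag; the original setup may use a different version.
    image: docker.elastic.co/elasticsearch/elasticsearch:7.17.0
    environment:
      - discovery.type=single-node   # single-node mode, adequate for a test setup
    networks:
      - elk

  logstash:
    # Placeholder name standing in for the custom Logstash image built from the Dockerfile.
    image: example/custom-logstash:latest
    ports:
      - "5044:5044"                  # conventional Beats input port that Filebeat ships to
    networks:
      - elk
    deploy:
      replicas: 1                    # assumed; scale as needed

  kibana:
    image: docker.elastic.co/kibana/kibana:7.17.0
    environment:
      - ELASTICSEARCH_HOSTS=http://elasticsearch:9200   # reaches Elasticsearch by service name
    ports:
      - "5601:5601"                  # Kibana web UI
    networks:
      - elk

networks:
  elk:
    driver: overlay                  # overlay network so services can talk across swarm nodes
```

A file like this would typically be deployed from the manager node with `docker stack deploy -c <compose-file> <stack-name>`, and `docker service logs <service-name>` is one way to inspect a service that does not come up as expected.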

The code files can be found in the below GitHub repo:

Log Analysis Components

Below are the components which we will be launching or configuring through the steps below.

Logstash: This is an open source data processing pipeline which processes log data from multiple sources. For our scenario, Logstash will process the log data sent by Filebeat.

Filebeat: This acts as a shipper which forwards log data to the Logstash endpoint. It will be launched as a Docker container on each app server where the logs have to be monitored, and the log data is sent to the Logstash service so that it can be analyzed in Kibana.

I have explained the overall architecture of this setup in part 1 of the series. Below is the architecture for reference.

In this part, I will explain how to launch the Logstash Docker service in the swarm and how to set up the Filebeat container on each of the app servers. The required infrastructure can be launched using the CloudFormation template which I have included in the GitHub repo. That template can be used to launch a CloudFormation stack which will provision the necessary networking resources and the instances. Once the instances are launched, the latest Docker needs to be installed on them and the Docker service needs to be started.

Prepare Individual Components

Logstash

Below is the Docker-compose snippet which will launch the Logstash service:

This cannot be tested separately, as it depends on the Elasticsearch service. We will test it below, once it is launched along with the other services.

Filebeat

Filebeat will be launched as a Docker container on each of the app servers separately. I am using a custom Filebeat Docker image to configure the output Logstash endpoint and the local log folder paths. The custom image can be found at.
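The two Filebeat settings mentioned above, the output Logstash endpoint and the local log folder paths, might look roughly like this in a filebeat.yml. This is a minimal sketch, not the file from the repo: the log path and the Logstash host name are hypothetical placeholders, and port 5044 is only the conventional Beats port.

```yaml
# Hypothetical filebeat.yml sketch; the path and host below are placeholders.
filebeat.inputs:
  - type: log                  # classic log input; newer Filebeat versions favor "filestream"
    enabled: true
    paths:
      - /var/log/app/*.log     # assumed folder where the app server writes its logs

output.logstash:
  # Assumed address of the Logstash service as reachable from the app server.
  hosts: ["logstash.example.internal:5044"]
```

Keeping these values in the config file means that pointing Filebeat at a different log folder or Logstash endpoint only requires editing the file and rebuilding the custom image.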
In this part of the post, I will walk through the steps to deploy Logstash as a Docker service and to launch Filebeat containers to monitor the logs. I am using custom Docker images for Logstash and Filebeat so that settings for both can be customized through custom config files. I have also included both Dockerfiles and the custom config files in the repo. If any other custom settings are needed, define the parameters in the config file and rebuild the images from the Dockerfiles.

Below are the prerequisites which need to be installed on the servers or instances where Logstash or Filebeat will be running:

Deploy an ELK stack as Docker services to a Docker Swarm on AWS - Part 2

This is the second part of a two-part series in which I walk through a way to deploy the Elasticsearch, Logstash, Kibana (ELK) stack.
